Neil Gaiman, as quoted by Cal Newport: “people are leaving [social media]. You know, Twitter is over, yeah Twitter is done, Twitter’s… you stick a fork in, it’s definitely overdone. The new Twitters, like Threads and Bluesky… nothing is going to do what that thing once did. Facebook works but it doesn’t really work. So I think probably the era of blogging may return and maybe people will come and find you and find me again.”

If you don’t shut up I’m gonna give you such a

writing about the Beatles

[I’m taking this one down — didn’t intend to make an enemy, but evidently that’s what I did. And it’s just a blog post after all, no loss to the world.]

The blur

A brief explanation of how, when I teach a class, I try to have a structure and a story.

two summative thoughts about AI

One: There was until recently a battle for the soul of AGI research and development, a battle between the stewards and the exploiters. The stewards understand themselves to be the duty-bound custodians of an ever-more-enormous power; the exploiters are interested in using that power to make themselves rich and powerful. Had the stewards managed to retain control, or even influence, then I would have been willing to keep a cautiously hopeful eye on developments. However, the stewards have been routed and only the exploiters remain. (OpenAI’s dismissal of Sam Altman was effectively The Stewards’ Last Stand.) I therefore consider it necessary to refuse any use of AI in any circumstances that I can control.

Two: The powers of law are being summoned by people who see the exploiters as I do, which I guess is a good thing, but … in our society, can anyone as rich as the tech companies behind AGI lose, either in the courts or through legislation? I don’t see how they can. Everyone who stands in their way can be bought, and most of them are pleading to be bought. (Similarly, in Premier League football, Everton is small enough to be smacked down but I cannot imagine Manchester City or Chelsea ever suffering any penalty, no matter how grossly they have defied the financial rules.) As Dana Gioia taught us long ago,

Money. You don’t know where it’s been, but you put it where your mouth is. And it talks.

structure and story

I regularly teach in the Great Texts program here in Baylor’s Honors College, which is based on the old University of Chicago model pioneered (or anyway most fully developed) by Robert Maynard Hutchins and Mortimer Adler. Usually such courses are period-based but draw on many genres of writing: fiction, poetry, drama, philosophy, theology, political theory, etc. For reasons I won’t go into here, but will probably write about one day, any such interdisciplinary course in the humanities has a natural tendency to be governed by the concerns of political philosophy; questions about how we human beings should live a common life can be discerned in pretty much everything we read. It requires a conscious effort from the teacher not to let political philosophy govern the entire course, though it probably should be structurally dominant.

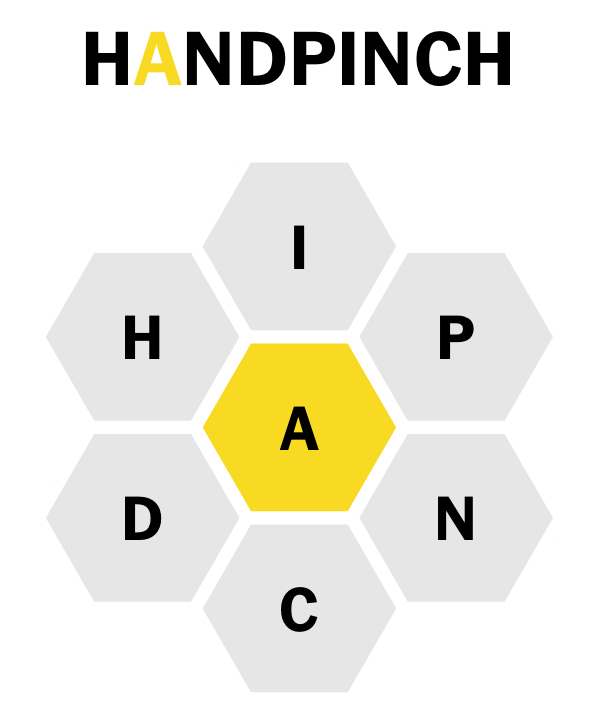

In order to teach a class like this well, I think, you need a structure and a story. Right now I’m teaching the 19th century: Burke (yes, I know, he’s at the end of the previous century), Austen, Kierkegaard, Mill, George Eliot, Marx & Engels, Nietzsche, Dostoevsky. A motley crew! Which is why you need a structure, or, to be more precise, a strategy of heuristic simplification. Mine looks like this:

First, I divide the writers and thinkers of the era into three large groups:

- the reactionaries

- the meliorists

- the revolutionaries

We’re probably not reading any genuine reactionaries in this class – people like Joseph de Maistre for instance – because their influence in their own time was not great. (Their influence on the 20th century is much greater.) I say we’re probably not reading any reactionaries because the case can be made that Dostoevsky is a reactionary, but I prefer to think of him as a revolutionary. More on that later.

Much of the first half of the course is devoted to the great English meliorist tradition, the intellectual world contested by conservative meliorists (Burke, Austen) and liberal meliorists (Mill, Eliot). Then we turn our attention in the latter part of the term to more radical figures, some of whose concerns had been anticipated by Kierkegaard.

So we’re focusing on thinkers and artists who believe that the social order needs to be changed, but differ about whether that change should be pursued by gradual or dramatic means. And they differ in other respects too, for instance:

- the reasons change is needed

- the arena in which change should primarily be pursued

- the means by which change should be pursued

What do I mean by “arena”? Perhaps I can illustrate by referring to my revolutionaries:

- Marx & Engels give their attention to the arena of political economy

- Nietzsche’s primary arena is intellectual and moral formation

- Dostoevsky’s arena is the world of spiritual warfare (political economy and intellectual life being, for him, downstream of spiritual matters)

Another and simpler way to put this is to say that revolutionaries (like meliorists!) may want revolutions in systems and institutions or in hearts and minds – and we may note that if you’re focused on the former you’ll probably write treatises, while if you’re focused on the latter you’ll probably write novels. (Though George Eliot, maybe more than any other 19th-century writer with the possible exception of Tolstoy, manages to maintain a double focus in several of her books, most prominently Middlemarch.)

That’s the structure I employ in this course. And from that structure emerges the story I tell. I leave it as an exercise for the reader to decide what that story is likely to be.

Matthew Butterick: “If AI companies are allowed to market AI systems that are essentially black boxes, they could become the ultimate ends-justify-the-means devices. Before too long, we will not delegate decisions to AI systems because they perform better. Rather, we will delegate decisions to AI systems because they can get away with everything that we can’t. You’ve heard of money laundering? This is human-behavior laundering. At last — plausible deniability for everything.”